Experimental Research Design: 6 mistakes you should never make!

From their school days, students perform scientific experiments whose results demonstrate the laws and theorems of science. These experiments rest on a strong foundation of experimental research design.

An experimental research design helps researchers execute their research objectives with more clarity and transparency.

In this article, we will discuss not only the key aspects of experimental research designs but also the issues to avoid and the problems to resolve while designing your research study.

What Is Experimental Research Design?

Experimental research design is a framework of protocols and procedures created to conduct experimental research with a scientific approach using two sets of variables. The first set is held constant and serves as the baseline against which differences in the second set are measured. Experimental research is a prime example of a quantitative research method.

Experimental research helps a researcher gather the necessary data for making better research decisions and determining the facts of a research study.

When Can a Researcher Conduct Experimental Research?

A researcher can conduct experimental research in the following situations:

- When time is an important factor in establishing a relationship between the cause and the effect.

- When the behavior linking the cause and the effect is invariable, that is, it never changes.

- When the researcher wishes to understand the importance of the cause and the effect.

Importance of Experimental Research Design

A quality research design is the foundation on which a study that yields significant, publishable results is built. Moreover, an effective research design helps establish quality decision-making procedures, structures the research so that the data are easier to analyze, and addresses the main research question. Therefore, it is essential to devote undivided attention and time to creating an experimental research design before beginning the practical experiment.

By creating a research design, researchers also give themselves time to organize the research, set relevant boundaries for the study, and increase the reliability of the results. These efforts also help avoid inconclusive results. If any part of the research design is flawed, the flaw will be reflected in the quality of the results.

Types of Experimental Research Designs

Based on the methods used to collect data in experimental studies, the experimental research designs are of three primary types:

1. Pre-experimental Research Design

A study uses a pre-experimental research design when one group or several groups are observed after the factors presumed to cause an effect have been applied. The pre-experimental design helps researchers understand whether further investigation is necessary for the groups under observation.

Pre-experimental research is of three types:

- One-shot Case Study Research Design

- One-group Pretest-posttest Research Design

- Static-group Comparison

2. True Experimental Research Design

A true experimental research design relies on statistical analysis to support or refute a researcher’s hypothesis. It is one of the most accurate forms of research because it provides specific scientific evidence. Furthermore, of all the types of experimental designs, only a true experimental design can establish a cause-effect relationship within a group. However, a true experiment must satisfy three conditions:

- There is a control group that is not subjected to changes and an experimental group that experiences the changed variables

- There is a variable that the researcher can manipulate

- Distribution is random, that is, subjects are randomly assigned to the control and experimental groups

This type of experimental research is commonly observed in the physical sciences.
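
As a minimal sketch of the random distribution that a true experiment requires, read here as random assignment of subjects to groups, the Python snippet below shuffles a list of subjects and splits it into a control group and an experimental group. The function name `randomly_assign`, the participant labels, and the fixed seed are illustrative assumptions, not part of any standard protocol.

```python
import random


def randomly_assign(units, seed=None):
    """Shuffle the units and split them into two groups of (near) equal size.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)       # a seeded generator keeps the allocation reproducible
    shuffled = list(units)          # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "control": shuffled[:half],        # receives no change
        "experimental": shuffled[half:],   # receives the manipulated variable
    }


# Hypothetical participant IDs P01..P20
participants = [f"P{i:02d}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=42)

print("Control:     ", groups["control"])
print("Experimental:", groups["experimental"])
```

A fixed seed makes the allocation reproducible, which can help when the procedure has to be documented or audited.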

3. Quasi-experimental Research Design

The word “quasi” means “seemingly” or “resembling.” A quasi-experimental design resembles a true experimental design, but the two differ in how participants are assigned to groups. In a quasi-experimental design, an independent variable is manipulated, but the participants are not randomly assigned to groups. This type of design is used in field settings where random assignment is either not feasible or not required.

The classification of the research subjects, conditions, or groups determines the type of research design to be used.

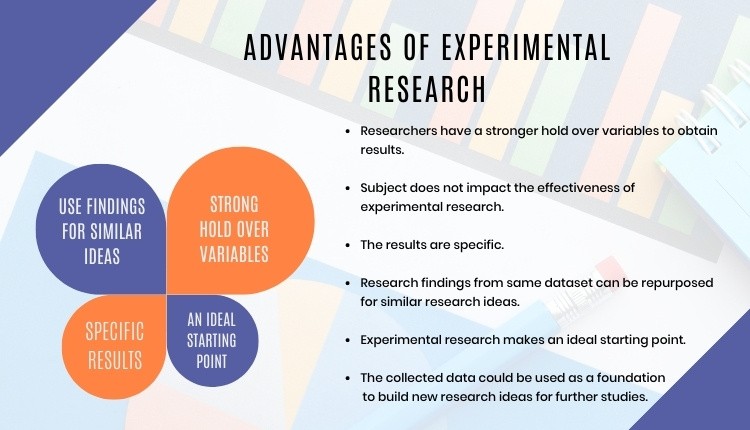

Advantages of Experimental Research

Experimental research allows you to test your idea in a controlled environment before taking the research to clinical trials. Moreover, it provides the best method to test your theory because of the following advantages:

- Researchers have firm control over variables to obtain results.

- The subject area does not limit the effectiveness of experimental research. Researchers in any field can use it.

- The results are specific.

- After the results are analyzed, findings from the same dataset can be repurposed for similar research ideas.

- Researchers can identify the cause-effect relationship stated in the hypothesis and analyze it further to gain deeper insight.

- Experimental research makes an ideal starting point. The collected data could be used as a foundation to build new research ideas for further studies.

6 Mistakes to Avoid While Designing Your Research

There is no order to this list, and any one of these issues can seriously compromise the quality of your research. You could refer to the list as a checklist of what to avoid while designing your research.

1. Invalid Theoretical Framework

Researchers often fail to check whether their hypothesis can logically be tested. If your research design does not rest on basic assumptions or postulates, it is fundamentally flawed and you need to rework your research framework.

2. Inadequate Literature Study

Without a comprehensive literature review, it is difficult to identify and fill the gaps in knowledge and information. Furthermore, you need to state clearly how your research will contribute to the field, either by adding value to the pertinent literature or by challenging previous findings and assumptions.

3. Insufficient or Incorrect Statistical Analysis

Statistical results are among the most trusted forms of scientific evidence. The ultimate goal of a research experiment is to gain valid and sustainable evidence. Therefore, incorrect statistical analysis can undermine the quality of any quantitative research.

4. Undefined Research Problem

Defining the research problem is one of the most basic aspects of research design. The problem statement must be clear, and to make it so, you must set a framework for developing research questions that address the core problems.

5. Research Limitations

Every study has limitations of some kind. You should anticipate those limitations and incorporate them into your conclusion as well as into the basic research design. Include a statement in your manuscript about any perceived limitations and about how you considered them while designing your experiment and drawing your conclusion.

6. Ethical Implications

Ethics is the most important yet least discussed aspect of research design. Your research design must include ways to minimize any risk to your participants while still addressing the research problem or question at hand. If you cannot uphold ethical norms throughout your study, your research objectives and validity could be questioned.

Experimental Research Design Example

In an experimental design, a researcher gathers plant samples and then randomly assigns half of the samples to be kept in sunlight and the other half to be kept in a dark box, while controlling all the other variables (nutrients, water, soil, etc.).

By comparing the outcomes of the two groups in biochemical tests, the researcher can attribute the changes in the plants to the sunlight rather than to the other variables.
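
One way to compare the two groups in the plant example is a permutation test on the difference in group means, sketched below in Python. The measurements are made-up placeholder values, and `permutation_test` is a hypothetical helper written for this illustration; a real analysis would use whichever test the study's statistical plan specifies.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Estimate a two-sided p-value for the difference in group means.

    Hypothetical helper: it repeatedly reshuffles the group labels and counts
    how often the reshuffled difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)

    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    return observed, extreme / n_permutations


# Placeholder values (NOT real data): some biochemical readout per plant sample
sunlight = [8.1, 7.9, 8.4, 8.0, 8.3]
dark = [2.2, 2.5, 1.9, 2.4, 2.1]

difference, p_value = permutation_test(sunlight, dark)
print(f"Observed difference in means: {difference:.2f}")
print(f"Estimated p-value: {p_value:.4f}")
```

The p-value estimates how often a difference at least this large would arise if the group labels had been assigned purely by chance. A small value is consistent with sunlight, rather than chance, driving the difference.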

Experimental research is often the final stage of the research process and is considered to provide conclusive and specific results. However, it does not suit every study. It demands considerable resources, time, and money, and it is difficult to conduct unless a foundation of prior research has been built. Even so, it is widely used in research institutes and commercial industries because it yields the most conclusive results of any scientific approach.

Have you worked on research designs? How was your experience creating an experimental design? What difficulties did you face? Do write to us or comment below and share your insights on experimental research designs!

Frequently Asked Questions

Randomization is important in experimental research because it guards against bias in the results of the experiment. It also allows the cause-effect relationship to be measured in a particular group of interest.

Experimental research design lays the foundation of a study and structures the research to establish a quality decision-making process.

There are three types of experimental research designs: pre-experimental, true experimental, and quasi-experimental.

The differences between a true experimental and a quasi-experimental design are: 1. Assignment to the control group in quasi-experimental research is non-random, whereas in a true experimental design it is random. 2. True experimental research always has a control group, whereas quasi-experimental research may not.

Experimental research establishes a cause-effect relationship by testing a theory or hypothesis using experimental groups or control variables. In contrast, descriptive research describes a study or a topic by defining the variables under it and answering the questions related to them.


7 Advantages and Disadvantages of Experimental Research

There are multiple ways to test and research new ideas, products, or theories. One of them is experimental research, in which the researcher keeps complete control over one set of variables and manipulates the other. Pharmaceutical research is a good example: researchers administer a new drug to one group of subjects and not to another, and monitor both groups. Comparing the treated subjects with people who are not taking the drug reveals the drug’s true effects. Because this type of research design tests only one variable at a time, it can be time consuming and open to error. However, if done properly, it is regarded as one of the most efficient and accurate ways to reach a conclusion. Several other considerations, some good and some bad, go into the decision of whether to use experimental research. Let’s take a look at both.

The Advantages of Experimental Research

1. A High Level of Control

With experimental research groups, the people conducting the research have a very high level of control over their variables. By isolating and defining exactly what they are looking for, they have a great advantage in finding accurate results.

2. Can Span Nearly All Fields of Research

Another great benefit of this type of research design is that it can be used in many different situations. Just as pharmaceutical companies can use it, so can teachers who want to test a new method of teaching. It is a basic but efficient type of research.

3. Clear-Cut Conclusions

Since there is such a high level of control, and only one specific variable is tested at a time, the results are much more relevant than those of some other forms of research. You can clearly see the success, failure, or effects when analyzing the data collected.

4. Many Variations Can Be Utilized

Experimental research comes in a very wide variety of forms. Each can provide different benefits, depending on what is being explored. Investigators can tailor the experiment to their own situation while still remaining within the bounds of a valid experimental research design.

The Disadvantages of Experimental Research

1. Largely Subject to Human Errors

As with anything, errors can occur. This is especially true of research and experiments. Any form of error, whether systematic (an error in the experiment itself), random (uncontrolled or unpredictable), or human (such as revealing who is in the control group), can completely destroy the validity of the experiment.

2. Can Create Artificial Situations

Because the researcher has such deep control over the variables being tested, the data can easily be skewed or corrupted to fit whatever outcome the researcher needs. This is especially true if the research is being done for a business or market study.

3. Can Take an Extensive Amount of Time to Do Full Research

Experimental testing requires a separate experiment to fully research each variable. This can make the testing take a very long time and consume a large amount of resources and money. A company may pass these costs on, which could raise prices for consumers.

Important Facts About Experimental Research

- Experimental research is most often used in medicine, including studies with animals.

- Every new medicine or drug is tested using this research design.

- Experimental research has countless variations, and its samples can be drawn in many ways, including probability, sequential, snowball, and quota sampling.
